How we perform our jobs and the choice of resources we use to inform our decisions are constantly changing.

Graduates will use artificial intelligence (AI) tools in their future careers, so AI needs to be embedded into curricula and linked to graduate attributes.

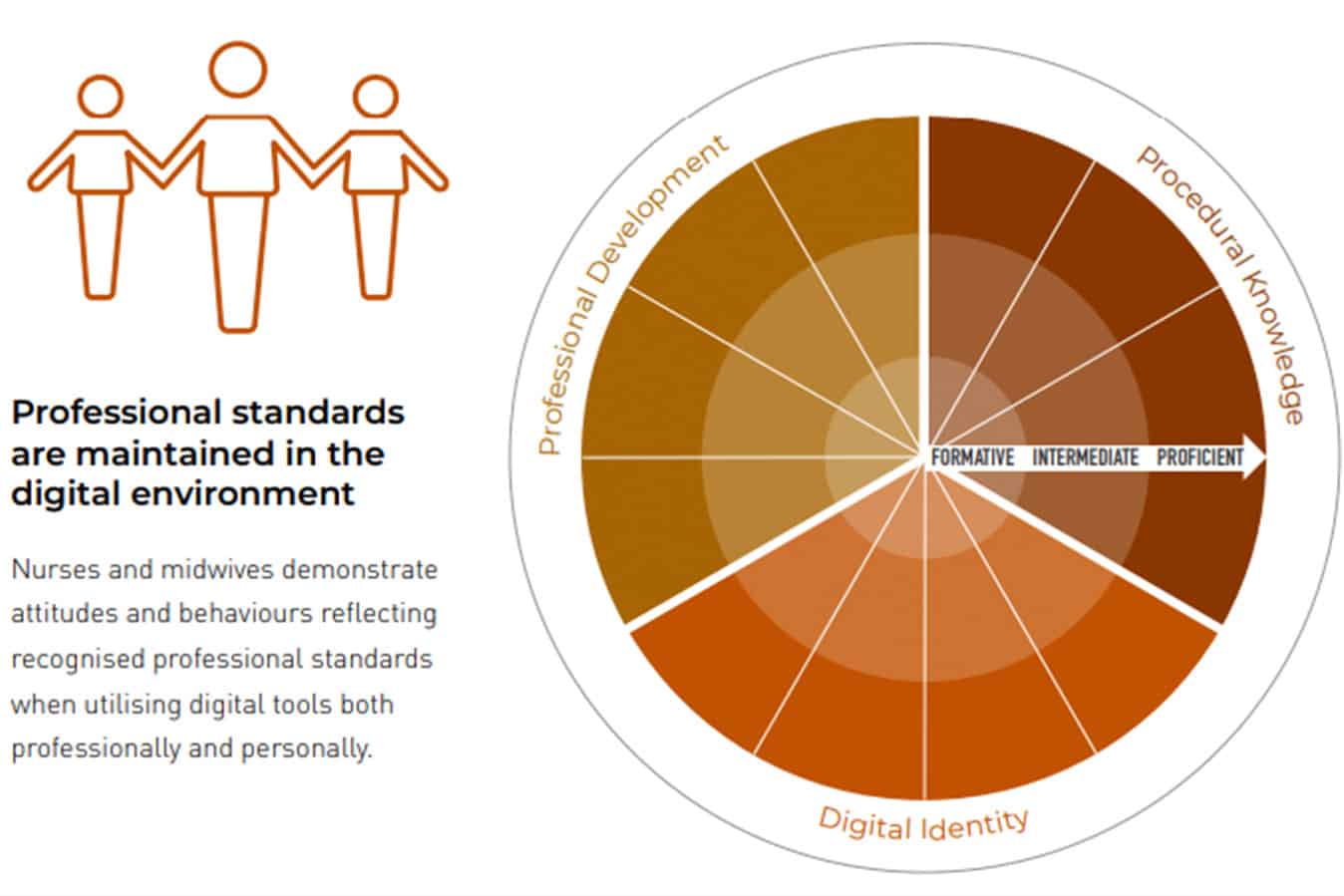

Consequently, as educators, we need to model safety and quality while using AI on campus, in clinical roles and in other professional environments. AI is a component of digital professionalism, which is required regardless of the discipline studied. Educators need to model and promote digital identity, prepare students for proficiency in digital literacy and model digital professionalism, which is a component of Domain 1 of the National Nursing and Midwifery Digital Health Capability Framework1 (Figure 1).

Our experience as educators enables us to provide students with personalised, flexible, and adaptable learning experiences. When guided to use AI, students receive immediate feedback, which they have ‘shaped’ to meet their needs.

As educators, we influence and scaffold individual, team, and collective learning.2 AI can be a study buddy, tutor, and idea generator in classrooms. More importantly, it can improve the work readiness of nursing and midwifery graduates for safety and quality outcomes in healthcare environments. AI-influenced education is transforming traditional teaching strategies and energising class discussion. This new way of working can invigorate online, distance, on-campus and off-campus learning experiences for nursing and midwifery students. Students can engage with disciplinary knowledge through AI and connect that knowledge to skills and critical thinking applicable to their future workplaces.3

For some educators, returning to in-class tests to manage the use of AI and academic integrity may seem tempting. However, AI is a powerful opportunity to rethink and design assessments that focus on what students need to know to be successful in the future and that prepare them to become work-ready graduates.

As educators and health professionals, we have a responsibility to protect against issues that may arise from integrating AI into educational experiences. As we learn more about the potential of AI, we have become aware of new risks to safety and quality outcomes. AI can generate information that is inappropriate or incorrect. AI can also be accompanied by emergent data privacy, security, and confidentiality risks, and preventing breaches requires careful educative strategies. Preparing graduates includes cautioning them against placing sensitive, identifiable, or confidential information into AI tools, where it can be harvested and reused, as doing so is a potential breach of digital professionalism. Furthermore, even though AI tools are trained on large amounts of data, bias can be introduced by those who build the tools, as developers choose which information is used to train AI algorithms. AI tools also have limits, such as the date range of their data or their capacity to link data logically, as AI tools cannot rationalise or think logically as humans or clinicians can.4

While AI tools can augment learning and assist with efficient resource use, they will not replace nursing and nursing care. In this evolving world of AI, educators need to be vigilant and coach students to be digitally literate, so they can be work-ready at graduation to ensure safety and quality outcomes within healthcare environments.

References

1 Australian Digital Health Agency. National Nursing and Midwifery Digital Health Capability Framework, v1.0. Sydney, NSW: Australian Government; 2020. Available at nursing-midwifery.digitalhealth.gov.au.

2 Mather C, Douglas T, O’Brien J. Identifying opportunities to integrate digital professionalism into curriculum: A comparison of social media use by health profession students at an Australian University in 2013 and 2016. Informatics. 2017; 4(2):10. https://doi.org/10.3390/informatics4020010.

3 Douglas T, James A, Mather C. Reimagining the role of discussion boards to engage richly diverse students and improve graduate success. Australia-ASEAN Academics Forum; June 2023.

4 Zhang A. https://www.hrmonline.com.au/data-protection/three-legal-risks-chatgpt-work/ (Accessed 21 July 2023).

Authors:

Dr Carey Mather RN, BSc, G.Cert ULT, G.Cert Creative Media Tech, G.Cert Research, P.Grad Dip Hlth Prom, MPH, PhD, MACN, FAIDH, FHEA. Senior Lecturer, Australian Institute of Health Service Management, University of Tasmania, Launceston, Tasmania, Australia.

Allison James B.Bus (Dist) (UCCQ), MBA (CQU), Grad Dip FET (USQ). Senior Lecturer, Australian Maritime College, Centre for Maritime & Logistics Management, University of Tasmania, Launceston, Tasmania, Australia.

Tracy Douglas SFHEA, BSc (Hons), MMEdSc, GradCertULT. Senior Lecturer, School of Health Sciences, University of Tasmania, Launceston, Tasmania, Australia.